Forecasting Short-Term Urban Dynamics: Data Assimilation for Agent-Based Modelling

Nick Malleson, Alice Tapper, Jon Ward, Andrew Evans

Schools of Geography & Mathematics, University of Leeds, UK

nickmalleson.co.uk

surf.leeds.ac.uk

These slides: http://surf.leeds.ac.uk/presentations.html

Code: https://github.com/nickmalleson/surf

Full paper: http://surf.leeds.ac.uk/p/2017-09-26-essa-da.pdf

Abstract

Background. The 'data deluge', coupled with related 'smart cities' initiatives, has led to a proliferation of models that capture the current state of urban systems to a high degree of accuracy. However, their ability to forecast future system states appears limited. Agent-based modelling (ABM) is well suited to modelling urban systems, but at present the methodology is seriously limited in its ability to incorporate up-to-date data (such as social media contributions, mobile telephone activity and public transport use) as they arise in order to reduce uncertainty in model forecasts.

Methods. This paper presents ongoing work that adapts data assimilation techniques from fields such as meteorology so that agent-based models can be optimised with streaming data in real time. We illustrate the approach with a simple agent-based model that simulates people travelling along a street. Importantly, the model is optimised dynamically with an ensemble Kalman filter in response to hypothetical pedestrian count data.

Findings. The data assimilation technique reliably estimates the model parameter that it is attempting to optimise. Surprisingly, however, the estimates of the 'true' system state that are produced by the model combined with noisy observations are less accurate than the observations in isolation. This is likely an artefact of the specific system under study here. Ultimately, we work towards a combination of ABM and data assimilation methods that will be able to assimilate streaming 'smart cities' data into models in real time.

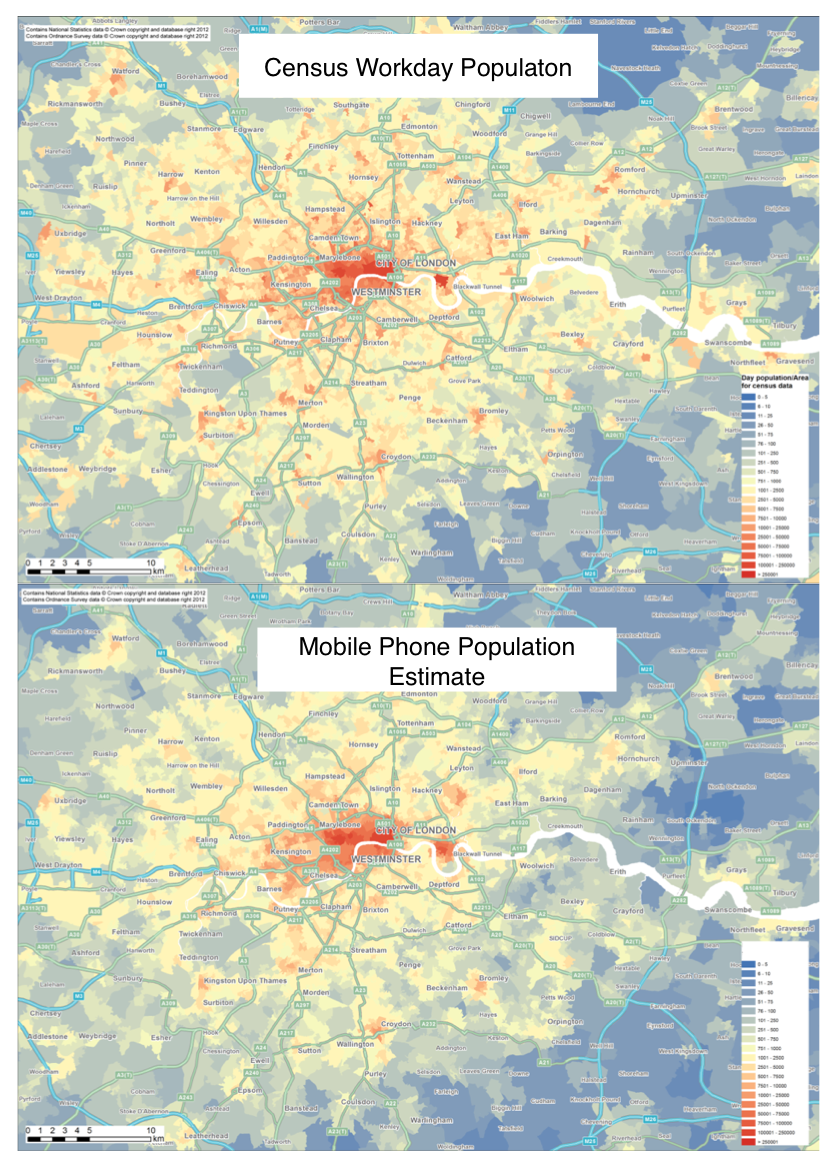

How many people are there in Trafalgar Square right now?

We need to better understand urban flows:

Crime – how many possible victims?

Pollution – who is being exposed? Where are the hotspots?

Economy – can we attract more people to our city centre?

Health – can we encourage more active travel?

Simulating Urban Flows (surf) - 3 year research project funded by the ESRC

Overview

Background - smart cities, the data deluge, and forecasting

ABM for a geographical simulation of urban flows

Major hurdle: real time calibration

Dynamic data assimilation for ABM

with an ensemble Kalman filter (EnKF)

Towards a real-time city simulation

Help and advice please!

Smart cities and the data deluge

Abundance of data about individuals and their environment

"Big data revolution" (Mayer-Schonberger and Cukier, 2013)

"Data deluge" (Kitchin, 2013a)

Smart cities

cities that "are increasingly composed of and monitored by pervasive and ubiquitous computing" (Kitchin, 2013a)

Large and growing literature

What about forecasting?

Abundance of real-time analysis, but limited forecasting. E.g.:

MassDOT Real Time Traffic Management system (Bond and Kanaan, 2015)

Detect vehicles with Bluetooth to analyse current traffic flows

Centro De Operacoes Prefeitura Do Rio (in Rio de Janeiro)

Advertise some predictive ability, but sparse detail

City dashboards

Forecasting ability is surprisingly absent in the literature

(correct me if I'm wrong!)

Why so little evidence of forecasting?

Proprietary systems? Company secrets?

A methodological gap?

Machine learning will probably help

E.g. short-term traffic forecasting (Vlahogianni et al., 2014)

But black box is a drawback - How to run diverse scenarios?

Maybe agent-based modelling could have the answer

ABM Problems

1. Computationally Expensive

Not amenable to machine-led calibration

2. Data hungry

Need fine-grained information about individual actions and behaviours

3. Divergent

Usually models represent complex systems

Projections / forecasts quickly diverge from reality

3. Divergence

Complex systems

One-shot calibration

Nonlinear models predict the near future well, but diverge from reality over time.

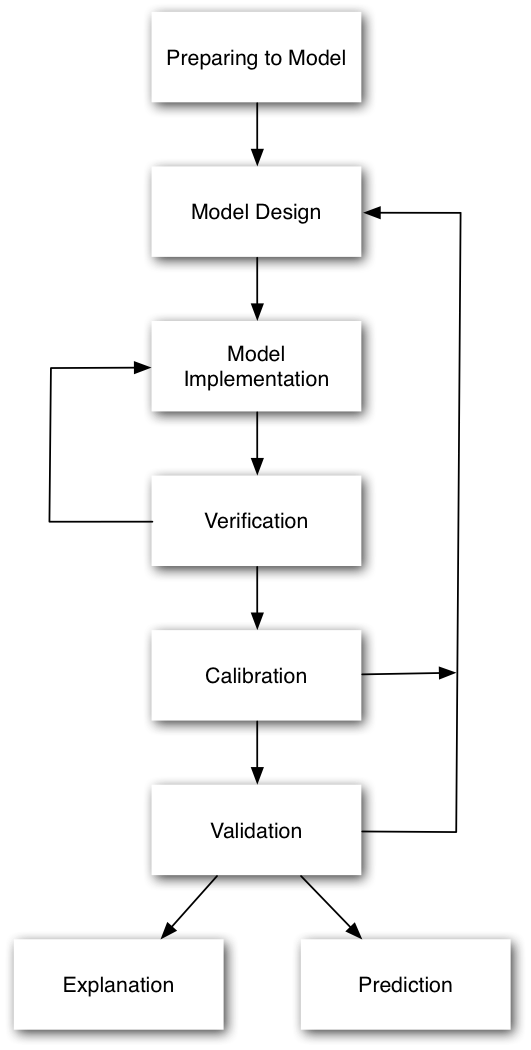

3. Divergence

Drawback with the 'typical' model development process

Waterfall-style approach is common

Calibrate until fitness is reasonable, then make predictions

But we can do better:

Better computers

More (streaming) data

Methodological gap

Dynamic Data Assimilation

Used in meteorology and hydrology to constrain models closer to reality.

Try to improve estimates of the true system state by combining:

Noisy, real-world observations

Model estimates of the system state

Should be more accurate than data / observations in isolation.
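
Why the combination should beat either source alone can be seen in a minimal worked case. The sketch below assumes a single scalar quantity with independent, unbiased Gaussian errors; these assumptions, and the symbols (forecast $x_f$ with variance $\sigma_f^2$, observation $y$ with variance $\sigma_o^2$, analysis $x_a$ with variance $\sigma_a^2$, gain $K$), are introduced here for illustration and do not appear on the slides.

```latex
\begin{aligned}
K &= \frac{\sigma_f^2}{\sigma_f^2 + \sigma_o^2}, \\
x_a &= x_f + K\,(y - x_f), \\
\sigma_a^2 &= (1 - K)\,\sigma_f^2
            = \frac{\sigma_f^2\,\sigma_o^2}{\sigma_f^2 + \sigma_o^2}
            \le \min(\sigma_f^2,\ \sigma_o^2).
\end{aligned}
```

Under these idealised assumptions the analysis variance is never larger than the observation variance. Real agent-based models are nonlinear and stochastic, so the guarantee need not carry over.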

Dynamic Data Assimilation

How?

Particle filters

Indoor footfall (Rai and Hu, 2013; Wang and Hu, 2015)

Kalman Filter

Air traffic (Chen et al., 2012)

Ensemble Kalman Filter (EnKF)

Pedestrian footfall (Ward et al., 2016)

Sequential Monte Carlo (SMC)

Wildfire (Hu, 2011; Mandel et al., 2012)

Approximate Bayesian Computation

?

Ensemble Kalman Filter (EnKF)

Maths

Broad literature, but generally tied to mathematical models (e.g. differential equations and linear functions)

Working with a mathematician to do the hard work!

In all its glory: Ward et al. (2016)

Advantages

Similar to Kalman Filter (best in class)

But better for nonlinear systems

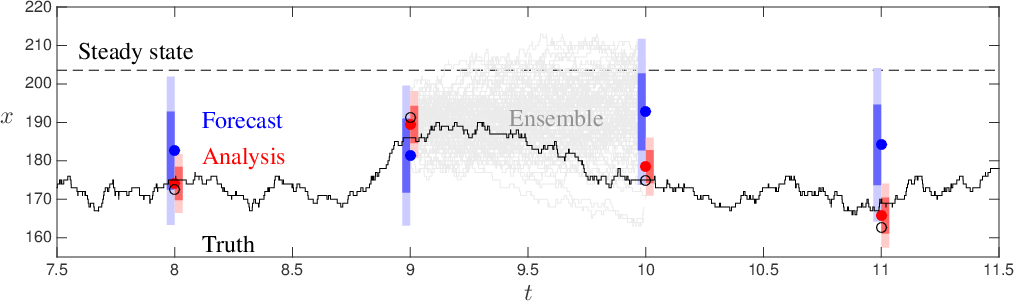

Ensemble Kalman Filter - Basic Process

1. Forecast.

Run an ensemble of models (ABMs) forward in time.

Calculate ensemble mean and variance

2. Analysis.

New 'real' data are available

Integrate these data with the model forecasts to create an estimate of the model parameter(s)

Impact of new observations depends on their accuracy

3. Repeat

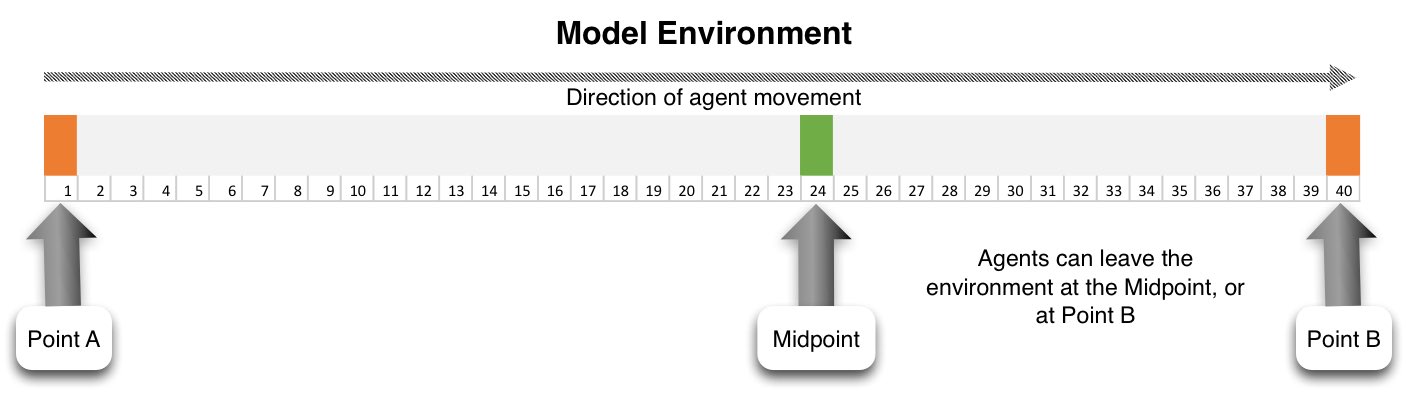
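
The forecast, analysis and repeat steps above can be sketched for a single scalar state. This is a minimal illustration using a perturbed-observation EnKF, not the implementation from the paper: `toy_model`, its coefficients, the ensemble size, the observation values and the noise levels are all made-up assumptions.

```python
import random
import statistics

def toy_model(count, rng):
    """Hypothetical stand-in for one ABM run: advance a footfall count one step."""
    return 0.9 * count + 10.0 + rng.gauss(0.0, 2.0)

def forecast_step(ensemble, rng):
    """1. Forecast: run every ensemble member forward in time."""
    return [toy_model(member, rng) for member in ensemble]

def analysis_step(ensemble, observation, obs_std, rng):
    """2. Analysis: move each member towards a perturbed copy of the observation."""
    forecast_var = statistics.variance(ensemble)
    # The gain is close to 1 when the observation is accurate relative to the
    # ensemble spread, and close to 0 when the observation is very noisy.
    gain = forecast_var / (forecast_var + obs_std ** 2)
    return [m + gain * (observation + rng.gauss(0.0, obs_std) - m)
            for m in ensemble]

rng = random.Random(1)
ensemble = [rng.gauss(50.0, 5.0) for _ in range(50)]
observations = [80.0, 82.0, 85.0]  # made-up counts, for illustration only

for obs in observations:  # 3. Repeat
    ensemble = forecast_step(ensemble, rng)
    print("forecast mean", round(statistics.fmean(ensemble), 1),
          "variance", round(statistics.variance(ensemble), 1))
    ensemble = analysis_step(ensemble, obs, obs_std=3.0, rng=rng)
    print("analysis mean", round(statistics.fmean(ensemble), 1))
```

The gain is where "impact of new observations depends on their accuracy" shows up: a larger `obs_std` shrinks the gain, so the ensemble stays closer to its own forecast.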

Ensemble Kalman Filter (EnKF)

Outline

Experiment with an EnKF

Very simple ABM - people walking along a street

Every hour, x people begin at point A

CCTV Cameras at either end count footfall

Some people can leave before they reach the end (bleedout rate)

Complete model state can be captured in a state vector.

Aim: Estimate the number of people who will pass camera B

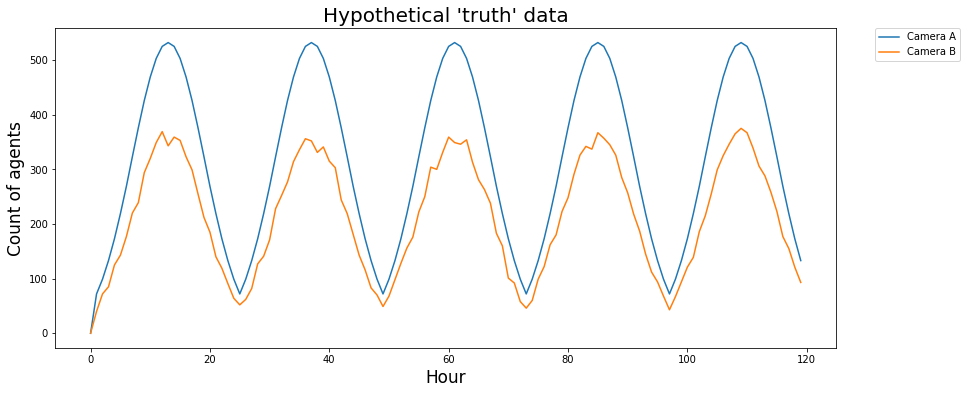
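
A street model of this kind can be sketched in a few lines. The version below is a guess at the general shape, not the project's code: it assumes the street is split into `n_segments` pieces, that an agent leaves within any one segment with probability `bleedout_rate`, and that everyone who starts in an hour finishes in that hour. All names and default values are hypothetical.

```python
import random

def step_hour(arrivals, bleedout_rate, n_segments, rng):
    """Simulate one hour and return how many of the arrivals reach camera B."""
    reached_b = 0
    for _ in range(arrivals):
        # The agent stays on the street only if it does not leave in any segment.
        if all(rng.random() >= bleedout_rate for _ in range(n_segments)):
            reached_b += 1
    return reached_b

def run_model(hours, arrivals, bleedout_rate, n_segments=5, seed=42):
    """Run the model and return one state vector per hour."""
    rng = random.Random(seed)
    history = []
    for _ in range(hours):
        count_b = step_hour(arrivals, bleedout_rate, n_segments, rng)
        # State vector: [count at camera A, count at camera B, bleedout rate]
        history.append([arrivals, count_b, bleedout_rate])
    return history

history = run_model(hours=24, arrivals=100, bleedout_rate=0.05)
print("first hour state vector:", history[0])
```

Keeping the bleedout rate inside the state vector is a deliberate choice in this sketch: it lets a filter treat the parameter like any other state variable.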

Hypothetical 'Truth' Data

Use the model to first generate a hypothetical reality

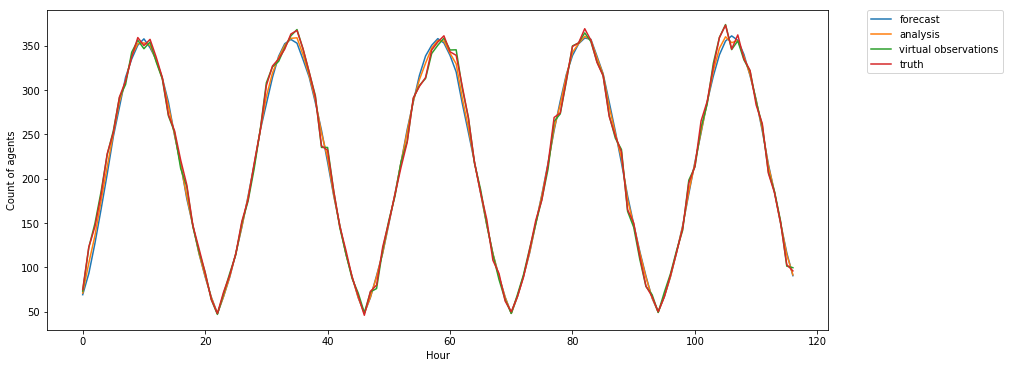
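
One common way to set up such a hypothetical reality is an identical-twin experiment: run the model once with a known parameter, call the result 'truth', then add noise to mimic imperfect camera counts. The sketch below assumes Gaussian observation noise and a one-line stand-in for the model; the function names and every numeric value are illustrative assumptions.

```python
import random

def generate_truth(hours, arrivals, bleedout_rate, rng):
    """Run a stand-in model once with a known parameter to create the 'truth'."""
    return [sum(rng.random() >= bleedout_rate for _ in range(arrivals))
            for _ in range(hours)]

def virtual_observations(truth, obs_std, rng):
    """Add Gaussian noise to the truth to mimic imperfect camera counts."""
    return [count + rng.gauss(0.0, obs_std) for count in truth]

rng = random.Random(7)
truth = generate_truth(hours=24, arrivals=100, bleedout_rate=0.2, rng=rng)
observed = virtual_observations(truth, obs_std=3.0, rng=rng)
print("truth:   ", truth[:5])
print("observed:", [round(value, 1) for value in observed[:5]])
```

Because the truth is known exactly, any estimate (forecast, analysis or raw observation) can later be scored against it.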

(Preliminary) Experimental Results

Summary

Number of agents who pass camera B:

forecast, analysis, virtual observations, truth

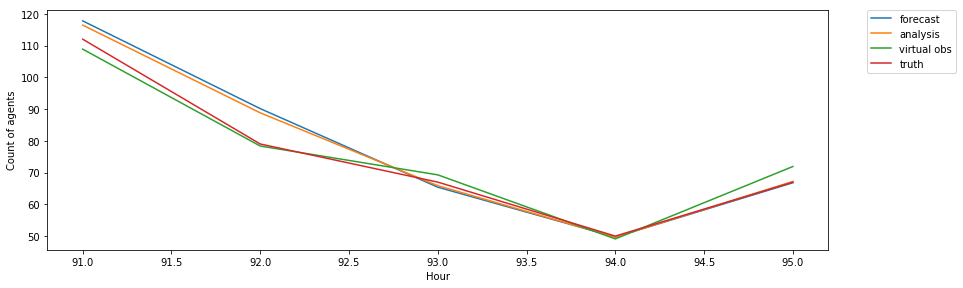

(Preliminary) Experimental Results

Summary

Number of agents who pass camera B

In short: data assimilation only marginally changed the system state.

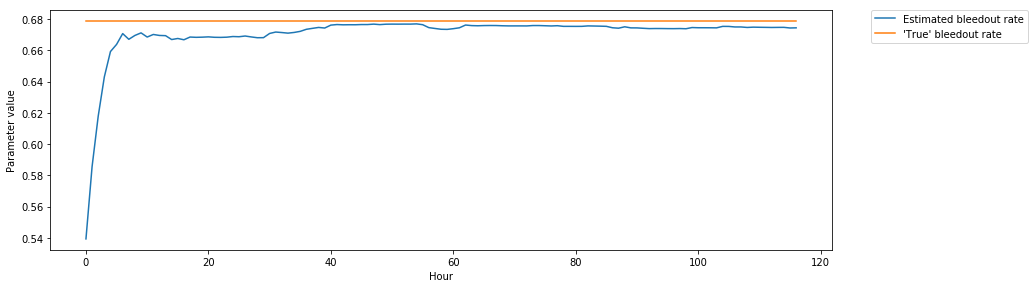

(Preliminary) Experimental Results

Parameter Estimation

Model parameters can be included in the model state vector.

Can the EnKF find the single model parameter? (The bleedout rate).
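
Including the parameter in the state vector means the filter updates it through its sampled covariance with the observed count. The sketch below shows this with a state of `[count, bleedout]` and a single count observation per hour. It is an assumption-laden illustration rather than the paper's code: the stand-in model, `ARRIVALS`, `SEGMENTS`, the uniform prior, the noise level and the clamping of the rate to [0, 1] are all hypothetical choices.

```python
import random
import statistics

ARRIVALS, SEGMENTS = 500, 5  # hypothetical values, not taken from the slides

def count_at_b(bleedout, rng):
    """Stand-in for the ABM: an agent survives each segment with prob. 1 - bleedout."""
    p_reach = (1.0 - bleedout) ** SEGMENTS
    return sum(rng.random() < p_reach for _ in range(ARRIVALS))

def enkf_update(ensemble, observation, obs_std, rng):
    """Update [count, bleedout] members from one noisy count observation."""
    n = len(ensemble)
    counts = [member[0] for member in ensemble]
    mean_count = statistics.fmean(counts)
    denom = statistics.variance(counts) + obs_std ** 2
    gains = []
    for d in range(2):
        comp = [member[d] for member in ensemble]
        mean_d = statistics.fmean(comp)
        # Sample covariance between this state component and the observed count.
        cov = sum((c - mean_d) * (k - mean_count)
                  for c, k in zip(comp, counts)) / (n - 1)
        gains.append(cov / denom)
    updated = []
    for count, bleedout in ensemble:
        innovation = observation + rng.gauss(0.0, obs_std) - count
        # Clamp so the updated rate remains a valid probability.
        new_rate = min(max(bleedout + gains[1] * innovation, 0.0), 1.0)
        updated.append([count + gains[0] * innovation, new_rate])
    return updated

def estimate_bleedout(true_bleedout=0.05, hours=20, members=50,
                      obs_std=10.0, seed=3):
    """Twin experiment: return the ensemble-mean bleedout rate after filtering."""
    rng = random.Random(seed)
    ensemble = [[0.0, rng.uniform(0.01, 0.15)] for _ in range(members)]
    for _ in range(hours):
        ensemble = [[count_at_b(b, rng), b] for _, b in ensemble]    # forecast
        observation = count_at_b(true_bleedout, rng) + rng.gauss(0.0, obs_std)
        ensemble = enkf_update(ensemble, observation, obs_std, rng)  # analysis
    return statistics.fmean([b for _, b in ensemble])

print("estimated bleedout rate:", round(estimate_bleedout(), 3))
```

The bleedout rate is never observed directly. It moves only because members with a higher rate tend to forecast lower counts, which gives the filter a covariance to work with.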

(Preliminary) Experimental Results

Quantifying Error (RMSE)

Forecast: 9.5; Analysis: 6.5; Observation: 3.0.

Surprising because we would expect Analysis < Observation

Virtual observations are closer to 'truth' than the analysis :-(

This is probably due to the degree of randomness in the model - intelligent guesses will rarely be closer to reality than observations (even noisy observations).

EnKF estimates the model parameter (bleedout rate) accurately :-)
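
For reference, the RMSE of a series against the truth can be computed as below. The function is the standard definition; the short series in the usage lines are made-up placeholders, not the experiment's data.

```python
import math

def rmse(estimates, truth):
    """Root mean squared error between two equal-length series."""
    if len(estimates) != len(truth):
        raise ValueError("series must have the same length")
    squared_errors = [(e - t) ** 2 for e, t in zip(estimates, truth)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Made-up placeholder series, for illustration only.
truth = [80.0, 82.0, 85.0, 83.0]
series = {
    "forecast": [70.0, 90.0, 78.0, 91.0],
    "analysis": [76.0, 86.0, 81.0, 87.0],
    "observation": [82.0, 80.0, 87.0, 84.0],
}
for name, values in series.items():
    print(name, round(rmse(values, truth), 2))
```

Scoring the forecast, the analysis and the raw observations with the same function against the same truth is what makes the three numbers comparable.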

Conclusion and Outlook

Surprising lack of smart cities forecasting

ABM potentially able to combine 'big' data to make more reliable short-term predictions

Lots of work needed to adapt data assimilation techniques

Future: a holistic city model, estimating the current state and predicting future states.

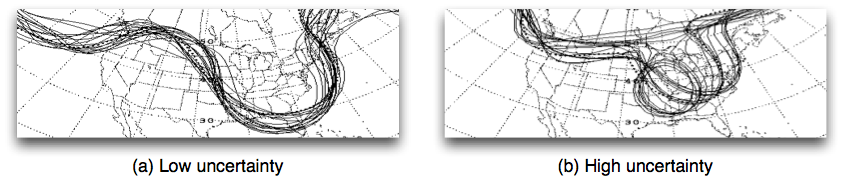

Conclusion and Outlook

Lots that we can borrow from the hard sciences

Emulators

Simplified versions of a computationally-expensive model that can be executed quickly

Good for exploring the parameter space

Uncertainty

Improved methods for quantifying and visualising uncertainty.

Future Work: DUST

Data Assimilation for Agent Based Models: Applications to Civil Emergencies

Recently funded by the ERC (Starting Grant), €1.5M over 5 years

For more information:

https://erc.europa.eu/news/erc-2017-starting-grants-highlighted-projects

References

Bond, R. and Kanaan, A. (2015). MassDOT Real Time Traffic Management System. In Planning Support Systems and Smart Cities, S. Geertman, J. Ferreira, R. Goodspeed, and J. Stillwell, Eds. Springer International Publishing, pp. 471–488.

Kitchin, R. (2013a). Big data and human geography: Opportunities, challenges and risks. Dialogues in Human Geography, 3(3):262–267.

Kitchin, R. (2013b). The Real-Time City? Big Data and Smart Urbanism. SSRN Electronic Journal.

Mayer-Schonberger, V. and Cukier, K. (2013). Big Data: A Revolution That Will Transform How We Live, Work and Think. John Murray, London, UK.

Ward, J. A., Evans, A. J. and Malleson, N. S. (2016). Dynamic Calibration of Agent-Based Models Using Data Assimilation. Royal Society Open Science, 3(4). doi:10.1098/rsos.150703.
